Intelligence is prediction. And it comes in two flavors: intuitive and reasoning. AGI is intuitive.

Update April 8: since twitter is now suppressing links to substack, I’ve cross posted a copy of this blog post to my older wordpress site here, so I can link to it easily on twitter. Not sure what I’ll do long term, but for now I’ll cross post to both sites.

Jeff Hawkins’ book On Intelligence, written with co-author and science writer Sandra Blakeslee, radically changed my understanding of how the brain works and what Artificial General Intelligence (AGI) is. I rank it alongside Pinker’s Language Instinct and Dawkins’ Selfish Gene. The highest praise I can give. Of course that’s an idiosyncratic view. For many, Jeff Hawkins is better known for the PalmPilot. Not as a brain and AI researcher. But I believe the view of intelligence laid out in On Intelligence in 2004 and A Thousand Brains in 2021 is correct. Last week Ben Thompson used Hawkins’ views to discuss how the Wolfram|Alpha plugin can stop Large Language Models (LLMs) like ChatGPT from hallucinating math errors. And yes. Hallucinate is now a technical term of art in AI research. ChatGPT doesn’t spew bullshit. It hallucinates! What I want to argue is that Hawkins’ view of intelligence as prediction is the best way to understand AGI, full stop. So let’s dig in.

Neocortex is modular with a single algorithm

Hawkins makes two central claims: 1) the key to understanding human intelligence is the neocortex, which is built on a single replicated algorithm able to create predictions from any type of sensory input, and 2) intelligence is prediction.

Neocortex first. Hawkins notes that Einstein created his theory of special relativity by accepting at face value the truly odd implications of the speed of light being constant in all reference frames. And:

There is an analogous discovery in neuroscience—a fact about the cortex that is so surprising that some neuroscientists refuse to believe it and most of the rest ignore it because they don’t know what to make of it. But it is a fact of such importance that if you carefully and methodically explore its implications, it will unravel the secrets of what the neocortex does and how it works. In this case, the surprising discovery came from the basic anatomy of the cortex itself, but it took an unusually insightful mind to recognize it. That person was Vernon Mountcastle, a neuroscientist at Johns Hopkins University in Baltimore. In 1978 he published a paper titled “An Organizing Principle for Cerebral Function.” In this paper, Mountcastle points out that the neocortex is remarkably uniform in appearance and structure. The regions of cortex that handle auditory input look like the regions that handle touch, which look like the regions that control muscles, which look like Broca’s language area, which look like practically every other region of the cortex. Mountcastle suggests that since these regions all look the same, perhaps they are actually performing the same basic operation! He proposes that the cortex uses the same computational tool to accomplish everything it does.

And

When I first read Mountcastle’s paper I nearly fell out of my chair. Here was the Rosetta stone of neuroscience—a single paper and a single idea that united all the diverse and wondrous capabilities of the human mind. It united them under a single algorithm. In one step it exposed the fallacy of all previous attempts to understand and engineer human behavior as diverse capabilities. I hope you can appreciate how radical and wonderfully elegant Mountcastle’s proposal is. The best ideas in science are always simple, elegant, and unexpected, and this is one of the best. In my opinion it was, is, and will likely remain the most important discovery in neuroscience. Incredibly, though, most scientists and engineers either refuse to believe it, choose to ignore it, or aren’t aware of it.

Talking about AI in 2023 we’d frame Mountcastle’s insight as scale. The neocortex replicates the same algorithm over and over and over. So don’t try to create AI using a complicated bespoke system. Instead take a small number of building blocks like convolutional nets, embeddings, attention, and transformers, and scale them up massively with a trillion tokens and terabytes of training text.

Intelligence is prediction

On to prediction. What’s Hawkins’ paradigm for intelligence? Intelligence is prediction. This sounds trite. But it’s not. It cuts through a philosophical wasteland of qualia, Chinese rooms, consciousness, Turing tests, blah blah blah. Philosophers can argue in their dorm rooms all they want. As a pragmatic matter, defining intelligence as prediction works for brains and AGI. Hawkins:

Your brain has made a model of the world and is constantly checking that model against reality. You know where you are and what you are doing by the validity of this model. Prediction is not limited to patterns of low-level sensory information like seeing and hearing. Up to now I’ve limited the discussion to such examples because they are the easiest way to introduce this framework for understanding intelligence. However, according to Mountcastle’s principle, what is true of low-level sensory areas must be true for all cortical areas. The human brain is more intelligent than that of other animals because it can make predictions about more abstract kinds of patterns and longer temporal pattern sequences. To predict what my wife will say when she sees me, I must know what she has said in the past, that today is Friday, that the recycling bin has to be put on the curb on Friday nights, that I didn’t do it on time last week, and that her face has a certain look. When she opens her mouth, I have a pretty strong prediction of what she will say. In this case, I don’t know what the exact words will be, but I do know she will be reminding me to take out the recycling. The important point is that higher intelligence is not a different kind of process from perceptual intelligence. It rests fundamentally on the same neocortical memory and prediction algorithm.

I’m deliberately using paradigm here as in Thomas Kuhn’s book The Structure of Scientific Revolutions. Science progresses in two distinct ways. Normal science takes an existing scientific paradigm and solves puzzles. In the Ptolemaic paradigm, the sun went around the earth. The puzzle of retrograde planetary motion was solved using epicycles. The Copernican Revolution created a new scientific paradigm where all the planets went round the sun. This meant that whenever the earth lapped slower planets orbiting farther out, say Jupiter, they’d appear to go backward (retrograde) relative to the stars. Anomaly resolved.

What happens if you don’t even have a paradigm? Kuhn says your observations are unmoored to theory, so in the strict sense are not scientific at all. They are pre-scientific. When I say Hawkins’ 2004 book is a classic (despite some flaws), this is why. Hawkins offered a straightforward paradigm for intelligence as prediction that cuts through a lot of bullshit about consciousness. I’d say Hawkins’ views fall into the category of what’s called predictive processing. But Hawkins is where I first learned about it, and why his writing struck me with such force.

More bluntly, what I’m saying (following Hawkins) is that brain research has been caught in a pre-paradigm rut for decades. Or to be more accurate and charitable, each research team has its own paradigm. But that’s not really science. The Copernican revolution for intelligence research will be when we align on a common paradigm of intelligence. Intelligence as prediction fits the bill. It can naturally fold other ideas such as Bayesian brains underneath it. And as far as I can tell, the LLM machine learning community has now reached a similar conclusion that intelligence is prediction. Albeit by following their own very independent path. This is the way.

Predictive abilities can be narrow or broad. Is a plant intelligent by this definition? Sure. It anticipates where the sunlight will be and grows directionally towards it. Does a chess playing computer have a type of limited intelligence? Yes. It predicts the consequences of moves on the board. When a person predicts the sun will come up tomorrow, that’s more generalized because it requires a complex model of the world. Someone who anticipates nearly everything you say has an even more sophisticated predictive model. We call that being smart.

This view of intelligence as prediction has elegance because it’s agnostic as to how predictions get made. Brains, computers, rule based, Bayesian, machine learning, whatever. In fact, Hawkins has updated his views since his first book. From A Thousand Brains:

My previous book, On Intelligence, explored this idea of learning and prediction. In the book, I used the phrase “the memory prediction framework” to describe the overall idea, and I wrote about the implications of thinking about the brain this way. I argued that by studying how the neocortex makes predictions, we would be able to unravel how the neocortex works.

Today I no longer use the phrase “the memory prediction framework.” Instead, I describe the same idea by saying that the neocortex learns a model of the world, and it makes predictions based on its model. I prefer the word “model” because it more precisely describes the kind of information that the neocortex learns. For example, my brain has a model of my stapler. The model of the stapler includes what the stapler looks like, what it feels like, and the sounds it makes when being used. The brain's model of the world includes where objects are and how they change when we interact with them. For example, my model of the stapler includes how the top of the stapler moves relative to the bottom and how a staple comes out when the top is pressed down. These actions may seem simple, but you were not born with this knowledge. You learned it at some point in your life and now it is stored in your neocortex.

The brain creates a predictive model. This just means that the brain continuously predicts what its inputs will be. Prediction isn't something that the brain does every now and then; it is an intrinsic property that never stops, and it serves an essential role in learning. When the brain's predictions are verified, that means the brain's model of the world is accurate. A mis-prediction causes you to attend to the error and update the model.

We are not aware of the vast majority of these predictions unless the input to the brain does not match. As I casually reach out to grab my coffee cup, I am not aware that my brain is predicting what each finger will feel, how heavy the cup should be, the temperature of the cup, and the sound the cup will make when I place it back on my desk. But if the cup was suddenly heavier, or cold, or squeaked, I would notice the change. We can be certain that these predictions are occurring because even a small change in any of these inputs will be noticed. But when a prediction is correct, as most will be, we won't be aware that it ever occurred.
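Hawkins’ coffee cup can be turned into a toy sketch. This is not his algorithm, just a minimal illustration of the loop he describes: predict the input, compare it with what arrives, notice only when the error is large, and nudge the model either way. The function name `observe`, the learning rate, the notice threshold, and the cup weights are all made-up assumptions for illustration.

```python
def observe(expected, actual, learning_rate=0.5, notice_threshold=0.1):
    """Compare a prediction with reality and update the model.

    Returns the updated expectation and whether the error was large
    enough to be consciously noticed. All values are illustrative.
    """
    error = actual - expected
    noticed = abs(error) > notice_threshold
    # Move the expectation part of the way toward what was observed.
    updated = expected + learning_rate * error
    return updated, noticed


expected_weight = 0.30  # kg, the brain's current model of the cup
noticed_log = []
for actual_weight in [0.30, 0.31, 0.60, 0.60]:
    expected_weight, noticed = observe(expected_weight, actual_weight)
    noticed_log.append(noticed)
    print(f"felt {actual_weight:.2f} kg, noticed={noticed}, "
          f"new expectation={expected_weight:.3f} kg")
```

The first two lifts match the model closely, so nothing reaches awareness. When the cup suddenly weighs twice as much, the error crosses the threshold and the expectation starts drifting toward the new weight.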

His current view is that each of the roughly 150k cortical columns in the neocortex creates its own predictive model based on reference frames. And those models vote on the reality we perceive. In some sense, we live less in reality than in our brain’s predictive model of reality. On Intelligence has a sole focus on intelligence as prediction. So, though out of date, it is perhaps a better read than A Thousand Brains, which sprawls across brain theory, limits of machine learning, AGI, AGI risk, and machine intelligence. I strongly recommend both books of course.

In any case, we’re done with Hawkins for now. What I want to do next is take his paradigm and run with it.

General Intelligence is common sense intuition

Frederick Jelinek is famous for his circa 1990 quip: “Every time I fire a linguist, the performance of the speech recognizer goes up.” Jelinek is often quoted because for decades the statistical machine learning approach to AI has trounced symbolic logic.

And it’s not just AIs that favor intuition over reason. In sports people visualize to improve. They imagine how shooting a basketball through the hoop will look and feel. They imagine being Steph Curry. Then they shoot the ball. This mental rehearsing is not about calculating the Newtonian dynamics of forces (the symbolic logic approach). It’s about priming intuition.

While LLMs and brains work differently, what they have in common is both are ultimately built atop predictive intuitions. LLMs get built at their lowest level from predicting the next word. People have far more sensory input and complexity, but like LLMs, we have no single self consistent logical theory behind our beliefs. Our intuitive predictions are ad hoc heuristics all the way down. LLMs work by mapping concepts into a roughly 10k dimensional embedding space, which turns concepts into high dimensional thought vectors. And there appear to be intriguing parallels between how our own brains map information into sparse, high dimensional models. But for our purpose today, we don’t care about implementation.
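To make “predicting the next word” concrete, here is a deliberately tiny sketch. It is a bigram counter, not a transformer: no embeddings, no attention, just counts of which word followed which. The corpus and the function names `train_bigram` and `predict_next` are invented for illustration. Real LLMs are vastly more sophisticated, but the training objective has the same flavor.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words were seen following it."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequently seen next word, or None if the word is unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" most often here
print(predict_next(model, "dog"))  # never seen, so no prediction
```

Notice there is no rule anywhere that says cats sit on mats. The model just has a statistical hunch about what comes next, which is the ad hoc heuristic point in miniature.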

What LLMs have demonstrated is that just like brains, general intelligence for a domain is built from predictive heuristics and intuitions. These intuitions need not be legible or logically consistent. In fact by their very nature they’ll be just the opposite. Illegible and inconsistent. We know what people call their intuitions: common sense. AGI is just generalized intuitive predictions. A type of common sense.

A side effect of our intelligence being built atop intuitions is they can go wrong. When LLMs make things up we call it hallucination. Humans have this problem too. In fact, people’s intuitions can conflict even when looking at an identical image. Recall the 2015 internet meltdown where people discovered the dress below was seen by some as black and blue. By others as white and gold.

I’m team white and gold. But your intuition may whisper a different truth.

Reason is built atop a substrate of common sense

We just finished glorifying common sense as General Intelligence. So where does that leave our dear lost friend reason? Psychology has a suggestion.

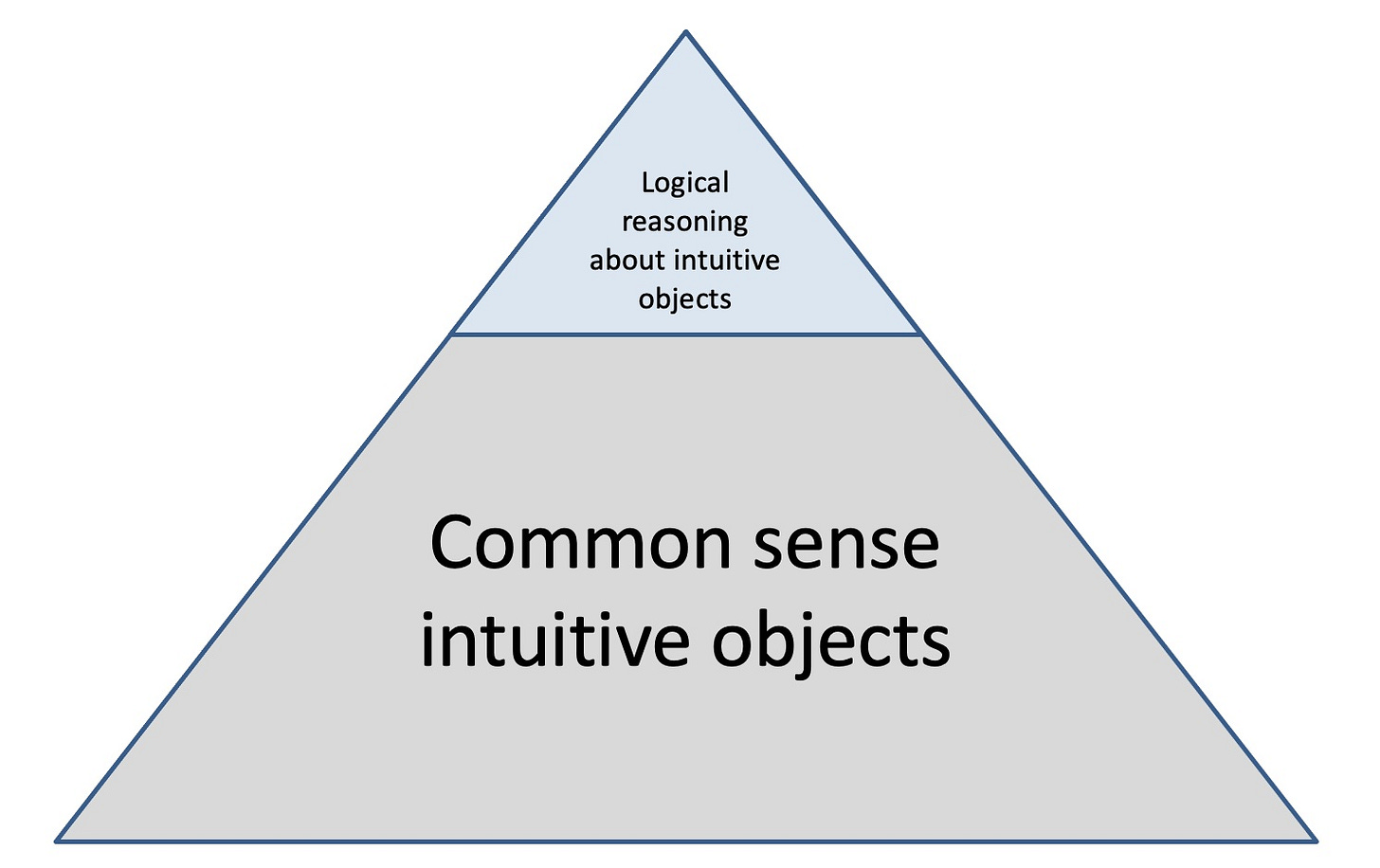

In his book Thinking, Fast and Slow, Daniel Kahneman describes two ways the brain thinks. System 1 is fast, automatic, emotional, unconscious, intuitive. Things like walking. Or seeing the color of a dress. What I’ve been calling common sense intuitions. It’s there at the bottom of the triangle below. System 2 is slow, effortful, conscious, logical. Deciding if I should do my taxes. Where to go on vacation. It’s how we ponderously reason. At the top of the triangle.

AI research has historically fought over whether statistical or rule based approaches are best. Purely rule based systems tend to be finicky and brittle. For example in the first self driving car DARPA challenges, none of the cars finished. But as statistical models began to dominate, self driving improved rapidly. Though it’s not there yet.

Statistical and rule based approaches to AI are not competitors. They’re complements. You can’t reason unless you have something to reason about. Logical reasoning has as its subject matter our common sense understanding of the world. Common sense is our general intelligence. Logical reasoning is brittle when unmoored from it. Of course in practice all attempts at AI have been hybrid mixtures of rules and statistics. But these were bespoke creations. What brains are telling us is once you have AGI with common sense intuitions, integrating analytic reasoning on top is straightforward.

To be clear, in brains and LLMs, there’s coupling between reasoning and intuition, because reasoning feeds back into improved intuitions. And vice versa. But conceptually it’s important to treat these as separate capacities which interact, not conflate them as a single unified AGI shoggoth.

For brains, scientific paradigms are logical structures grounded in common sense empirical reality. Otherwise they get lost in idle speculation and hallucination. You know. Like string theory.

With LLMs, logical reasoning can come from plug-ins like Wolfram|Alpha. Which fixed ChatGPT’s math hallucinations. You can think of ChatGPT using a plug-in as analogous to a human using a computer. But it goes far beyond that. It’s more like The Matrix. Neo gets an upload of knowledge with instant virtual training. And suddenly he looks over and says “I know Kung Fu.” It’s spooky. Plug-ins upload a set of logical reasoning directly into LLM brains. Why bother with tedious studying like a mere human. Just bam! Now you know kung fu.

Intelligence is prediction. But it comes in two flavors. General intelligence from intuitive predictions, based on massive data training. Narrow intelligence from logical reasoning. LLMs can plug in custom modules of narrow logical reasoning directly on top of their intuitive AGI.
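Here is a rough sketch of the two-flavor split, under heavy assumptions. It is not how ChatGPT plug-ins or Wolfram|Alpha actually work. `calculator_plugin` stands in for a narrow, exact reasoning module, and `intuitive_guess` is a hypothetical placeholder for the LLM’s fast approximate answer. The router simply tries the exact tool first and falls back to intuition when the prompt isn’t arithmetic.

```python
import ast
import operator

# Only plain +, -, *, / are supported in this toy plug-in.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator_plugin(expression):
    """Exactly evaluate simple arithmetic by walking the parsed expression."""
    def evaluate(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        raise ValueError("unsupported expression")
    return evaluate(ast.parse(expression, mode="eval").body)


def intuitive_guess(prompt):
    """Hypothetical stand-in for an LLM's fast, approximate answer."""
    return f"(intuitive answer to: {prompt})"


def answer(prompt):
    """Use the narrow reasoning module when it applies, intuition otherwise."""
    try:
        return str(calculator_plugin(prompt))
    except (ValueError, SyntaxError):
        return intuitive_guess(prompt)


print(answer("123 * 456"))
print(answer("why is the sky blue"))
```

The design choice worth noticing is that the exact module is narrow and brittle on its own. It only becomes useful because something more general sits underneath to catch everything it can’t handle.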

Taking the correct lessons from biology

Many people have the intuition that smart brains are extremely difficult for nature to evolve. Even though intelligence has evolved independently on multiple branches of life. Mammals have a neocortex, but birds evolved the dorsal ventricular ridge. And “these brain regions are constructed much like our neocortex, with both layerlike and columnar organization”. Smart invertebrates like the octopus have brains with even less in common with mammal brains, but the vertical lobe appears to have similar functional capability to our neocortex.

Which raises the question. If smarts come from scaling up a generalized algorithm, shouldn’t everything be smart? Well. Sometimes your brain’s intuition makes stuff up. What did we just call it? Ah yes, hallucination. Your intuition that evolution always favors big brains is an egotistical hallucination. You have to remember that under Malthusian conditions starving to death is commonplace. For adults, our brains take 20% of our calories. But in “the average 5- to 6-year-old, the brain can use upwards of 60% of the body's energy”. And of course historically “around one-quarter of infants died in their first year of life and around half of all children died before they reached the end of puberty.” If you want a realistic (if gruesome) intuition as to what nature thinks about big brains, picture half of all big-brained 5 year olds starving to death.

Let’s prime your intuition further. Which way of spending precious calories provides better reproductive fitness: Gigachad muscles or a PhD? If you have earned a PhD (congratulations!) and it’s blocking your common sense from guessing the right answer, click here.

Biological creatures evolve a drive to reproduce. But AGI in raw form is just a prediction engine. No more. No less. If we want to add agency or emotional drive to it we could. But that would require additional dedicated work. AGI is fundamentally amoral. A tool. Like any other technology. AI alignment should worry about humans doing bad things with the tech. And add guardrails against it.

What biology tells us is a) General Intelligence is foundationally about intuitions, not logical rules, b) the design space is large, c) alignment is about preventing human misuse, d) AGI needs massive scale to work, e) intelligence is a friggin’ power hungry monster. Our first inefficient attempts at building AGI on A100 GPUs are burning power white hot brighter than the sun. For living creatures, thinking has always been energetically expensive. AGI will be no different.

Summary

Intelligence is prediction. I believe that’s becoming the consensus paradigm for defining intelligence. If you disagree, fine. But offer your own. Or risk getting lumped in with pre-scientific stamp collectors. People who mock LLMs because all they do is predict the next word are ironically endorsing LLMs as intelligent. Even if they aren’t intelligent enough to realize this.

Predictive intelligence comes in two flavors: general intuitive intelligence, and narrow reasoning intelligence. Intuitive AGI in computers is analogous to intuitive common sense in humans.

For both computers and living things, general intelligence is an ad hoc mass of predictive heuristics and intuitions. These intuitions need not be legible or consistent. In fact, making intuitions legible and consistent requires a lot of hard work. Sometimes we call this science.

Logical reasoning’s natural dwelling place is atop a base of intuitive common sense. Now with intuitive LLMs we finally have our AGI cake. Logical reasoning is the frosting. Plug-ins layer that frosting onto LLMs.

Biology does not support the idea that AGI must be difficult. What biology tells us is intelligence has a large design space. And can be created by replicating a single predictive modeling algorithm to mass scale. And most importantly AGI will remain energetically expensive far into the future.

AGIs are amoral prediction engines. That means AI alignment should focus on guardrails to prevent human misuse. Not Skynet taking over.

LLMs are a true start towards AGI. How do we know? Because at least in the domain of words, LLMs have a broad based (common sense) intuition about how words fit together. Just like human brains.

As far as I can tell everything above is already non-controversial with many AI researchers. Though obviously this list is speculative. But if it holds, my intuitive prediction is a few years from now this list will appear incredibly boring and self evident. Didn’t we always believe this? It’s common sense! Our future paradigm for AGI is already here. Just not evenly distributed.
